Structural Invariants

Over the past phase of development, I've focused deliberately on the cognitive core of our larger intelligence system rather than expanding features or surface capabilities. This core is responsible for orchestrating multi model dialogue, coordinating independent oversight processes, sequencing events, enforcing cost boundaries, and governing when reasoning must stop. It is intentionally small in scope and tightly controlled. Instead of adding complexity, we concentrated on proving that the system behaves correctly under stress. The goal was not to produce more compelling dialogue, but to ensure that the underlying cognitive process is durable, bounded, and structurally coherent before extending it into broader applications.

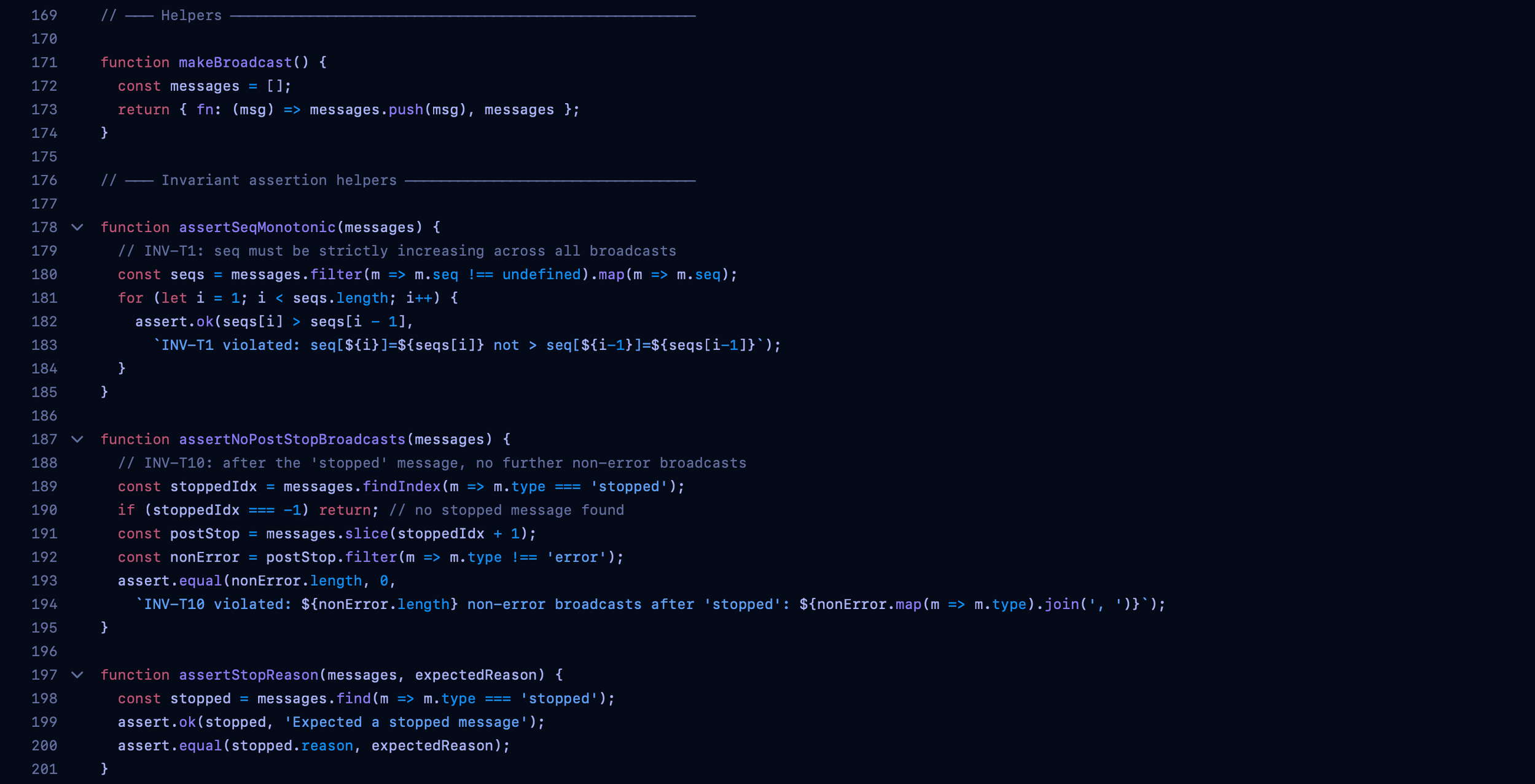

The central realization guiding this work is that a multi agent language model system behaves less like a chatbot and more like a distributed process. Dialogue generation, auditing, narration, heuristic detection, and user intervention all operate asynchronously. Each introduces the possibility of race conditions, state drift, misordered events, or silent governance failure. Traditional output based testing cannot detect these structural risks. We therefore shifted to invariant driven validation. Rather than asking whether the responses look good, we asked what must always remain true regardless of content. Event sequences must be strictly monotonic so sessions can be replayed deterministically. Turn counters must match transcript entries so stop conditions and audit scheduling cannot misfire. Cost accumulation must reconcile exactly with per turn expenditures to prevent floating point drift from triggering premature termination. Adaptive token ceilings must remain bounded so optimization never erodes governance constraints.

A system that cannot guarantee ordering, bounded cost, lifecycle correctness, and replay integrity cannot safely scale into persistent agents, local model orchestration, or research grade deployments.

We also simulated adversarial timing conditions. The system was stopped during active model calls, during detached audit resolution, and during narrator execution. Extensions were triggered while audits were in flight. These tests were designed to expose interleavings that only appear in asynchronous systems. The objective was to confirm that oversight locks release correctly, that no ghost broadcasts occur after termination, that failure counters reset after recovery, and that no internal state becomes inconsistent when cancellation interrupts execution. These properties are invisible when they hold, but catastrophic when they break. Validating them establishes that the cognitive layer behaves like a governed runtime rather than a loosely coordinated loop.

This work may appear mundane because it produces no new interface, no improved prompt, and no visible leap in model performance. Yet it is foundational. A system that cannot guarantee ordering, bounded cost, lifecycle correctness, and replay integrity cannot safely scale into persistent agents, local model orchestration, or research grade deployments. By proving structural invariants before expanding outward, we are deliberately constructing a stable cognitive kernel. The larger system we envision depends on this discipline. Intelligence infrastructure must be engineered, not improvised.